Hi, this is the website of Meng CHU (褚蒙, pronounced the same as "Trueman")!

I am a second-year Ph.D. student at the Hong Kong University of Science and Technology (HKUST), advised by Prof. Jiaya Jia.

Before that, I obtained my Master’s degree with Distinction from the National University of Singapore (NUS), supervised by Prof. Tat-Seng Chua and Prof. Zhedong Zheng.

My research focuses on Multimodal Learning, Agentic AI, and Large Language Models.

I am passionate about building intelligent systems that can understand and interact with the world.

If you are interested in collaboration, please feel free to contact me by email.

🔥 News

- 2026.03: 🎉🎉 One paper accepted to CVPR 2026!

- 2026.01: 🎉🎉 One paper accepted to AAAI 2026 as an Oral Presentation!

- 2025.07: 🎉🎉 One paper accepted to ICCV 2025!

- 2025.04: 🎉🎉 One paper accepted to ACM MM 2025!

- 2024.09: 🎉🎉 Started my Ph.D. journey at HKUST!

- 2024.07: 🎉🎉 One paper accepted to ECCV 2024!

📝 Publications

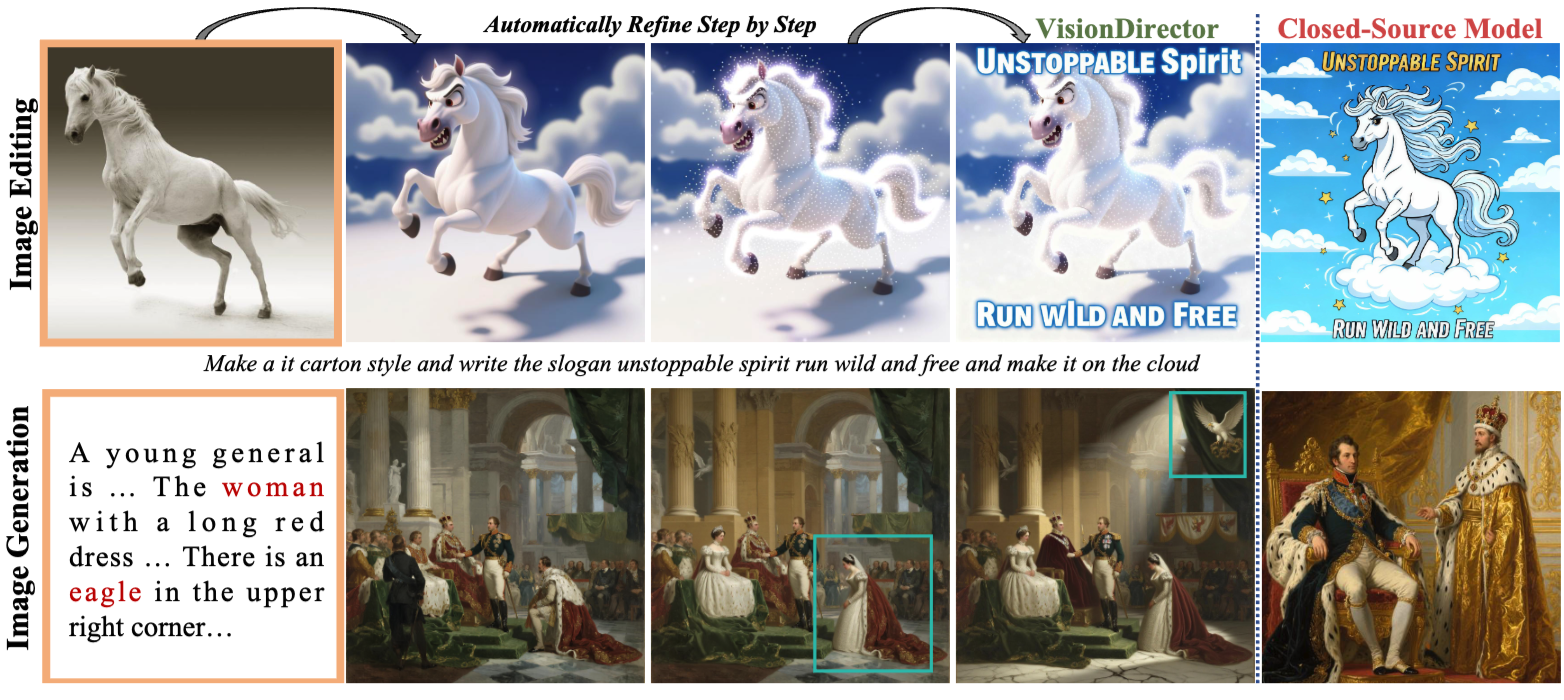

VisionDirector: Vision-Language Guided Closed-Loop Refinement for Generative Image Synthesis

Meng Chu, Senqiao Yang, Haoxuan Che, et al., Jiaya Jia

- LGBench - 2,000 tasks with 29,000+ structured goals.

- Training-free - VLM-driven closed-loop refinement.

- 26% Reduction - Editing rounds reduced via GRPO.

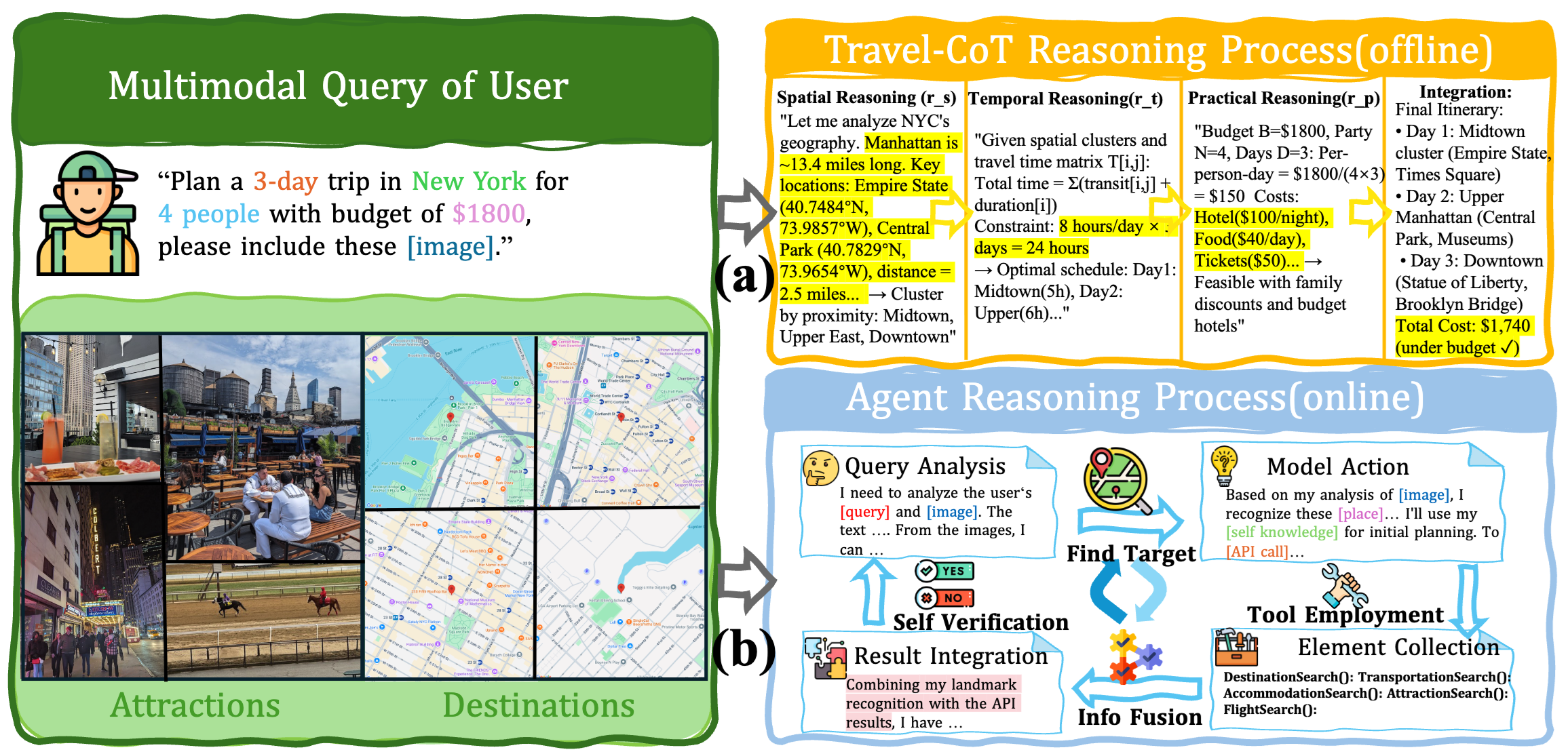

TraveLLaMA: A Multimodal Travel Assistant with Large-Scale Dataset and Structured Reasoning

Meng Chu, Yukang Chen, Haokun Gui, Shaozuo Yu, Yi Wang, Jiaya Jia

- TravelQA Dataset - 265K QA pairs across 35+ cities worldwide.

- Travel-CoT Reasoning - 10.8% accuracy improvement with structured reasoning.

- User Study - System Usability Scale (SUS) score of 82.5 (Excellent) from 500 participants.

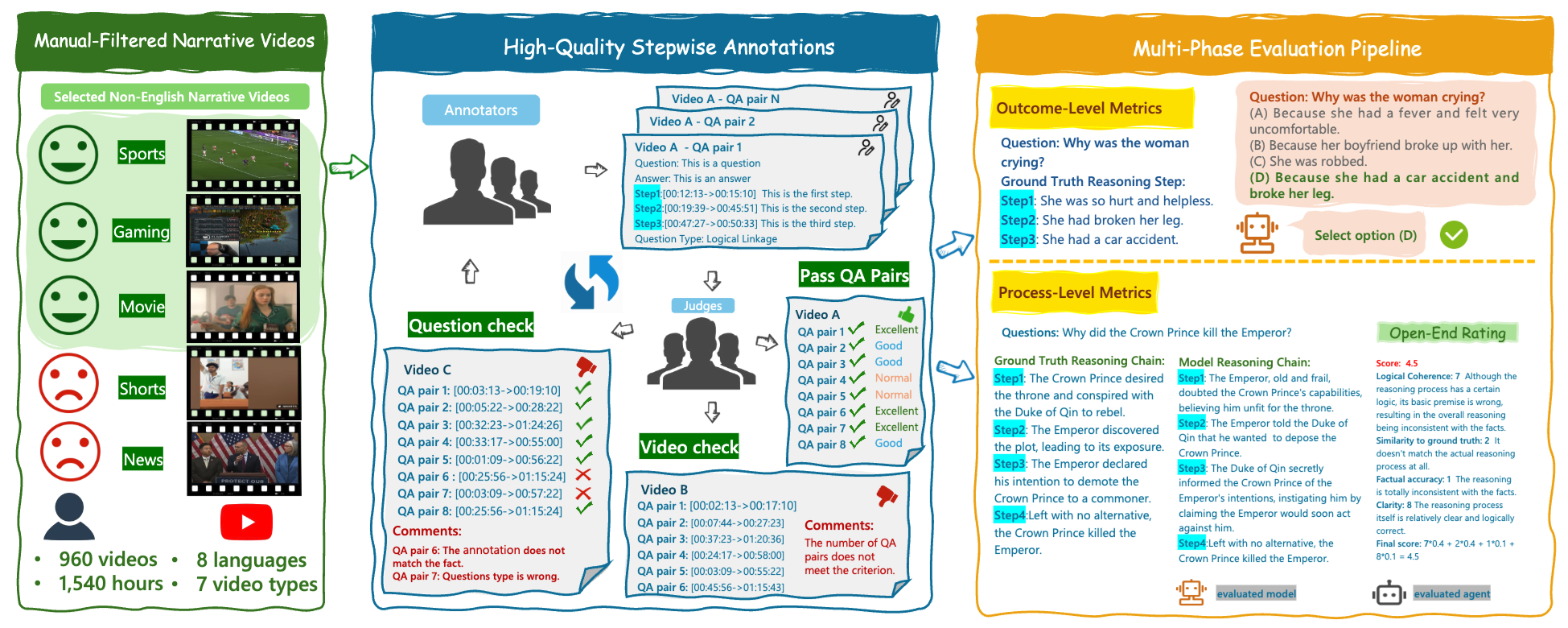

VRBench: A Benchmark for Multi-Step Reasoning in Long Narrative Videos

Jiashuo Yu*, Yue Wu*, Meng Chu*, Zhifei Ren*, Zizheng Huang*, et al.

- Long Narrative Videos - 960 videos averaging 1.6 hours each.

- Multi-step Reasoning - 8,243 QA pairs with 25,106 reasoning steps.

- Multi-phase Evaluation - Outcome- and process-level assessment.

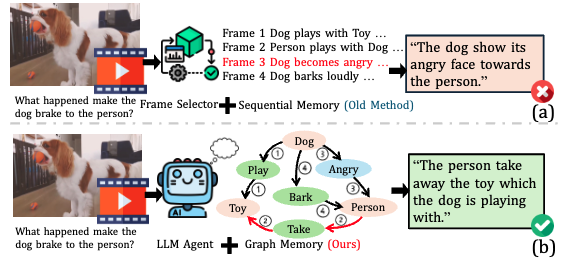

GraphVideoAgent: Understanding Long Videos via LLM-Powered Entity Relation Graphs

Meng Chu, Yicong Li, Tat-Seng Chua

- Graph-based Memory - Track entities and relations across long videos.

- Efficient Reasoning - 56.3%/73.3% accuracy with only 8.2 frames on average.

- Temporal Understanding - Long-form video comprehension.

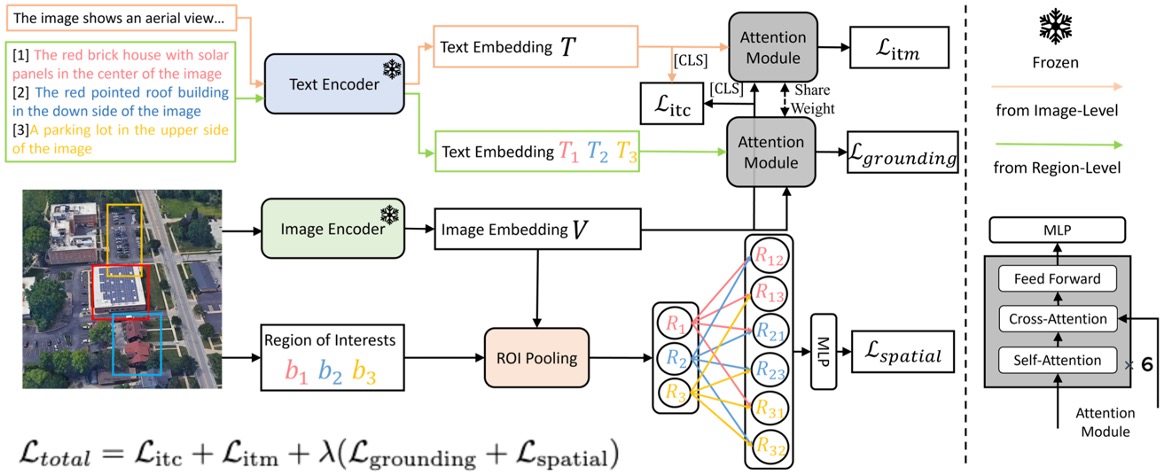

Towards Natural Language-Guided Drones: GeoText-1652 Benchmark with Spatial Relation Matching

Meng Chu, Zhedong Zheng, Wenhao Ji, Tao Wang, Tat-Seng Chua

- Drone-view Benchmark - Image-text-bounding box annotations for vision-language research.

- Spatial Relation Matching - Outperforms ALBEF and X-VLM.

- 31.2% Recall@10 - Significant improvement over baselines.

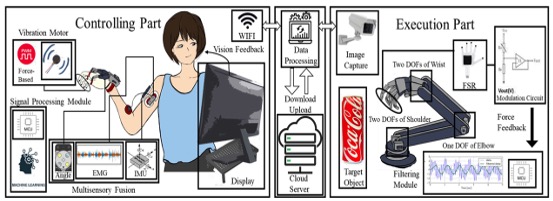

Multisensory Fusion, Haptic and Visual Feedback Based Teleoperation System Under IoT Framework

Meng Chu, Zhi Cui, Ailing Zhang, Jialei Yao, Chenyu Tang, Zhe Fu, Arokia Nathan, Shuo Gao

- Impact Factor 8.2 - Published in the IEEE Internet of Things Journal.

- Multisensory Fusion - Haptic and visual feedback integration.

- IoT Framework - Teleoperation system design.

🚀 Open-Source Projects

Capybara: A Unified Visual Creation Model

Meng Chu - Main contributor

- Unified Visual Creation - One framework for image and video generation/editing.

- Multi-Task Support - T2I, T2V, TI2I, and TV2V in one system.

- Open-Source Release - Core contributor to the public inference framework.

💼 Experience

- 2024.09 - 2025.04: Research Intern @ OpenGVLab, Shanghai AI Laboratory

  - Mentors: Dr. Yi Wang, Prof. Limin Wang

  - Worked on VRBench for multi-step reasoning in long narrative videos.

- 2022.12 - 2024.07: Research Intern @ NeXT++ Research Center, National University of Singapore

  - Mentors: Dr. Zhedong Zheng, Dr. Yicong Li

  - Developed the GeoText-1652 benchmark and GraphVideoAgent.

🎓 Education

- 2024.09 - Present: Ph.D. in Computer Science and Engineering

  - Hong Kong University of Science and Technology (HKUST)

  - Advisor: Prof. Jiaya Jia

- 2022.08 - 2024.06: M.Comp. in Artificial Intelligence (Distinction)

  - National University of Singapore (NUS)

  - GPA: 4.27/5.0, Dean’s List Fall 2023

  - Advisors: Prof. Tat-Seng Chua, Prof. Zhedong Zheng

- 2018.08 - 2022.06: B.Eng. in Instrumentation and Optoelectronic Engineering

  - Beihang University (BUAA)

  - GPA: 3.6/4.0, Top 0.3% in Gaokao

🎖 Honors and Awards

- 2024 M.Comp. with Distinction, National University of Singapore

- 2023 Dean’s List, National University of Singapore

- 2018 Top 0.3% in the National College Entrance Examination (Gaokao)